Load Flexibility’s Breakthrough Moment—Driven by Data Centers

How AI demand is driving unprecedented interest in flexible loads, distributed resources, and interactive grid infrastructure

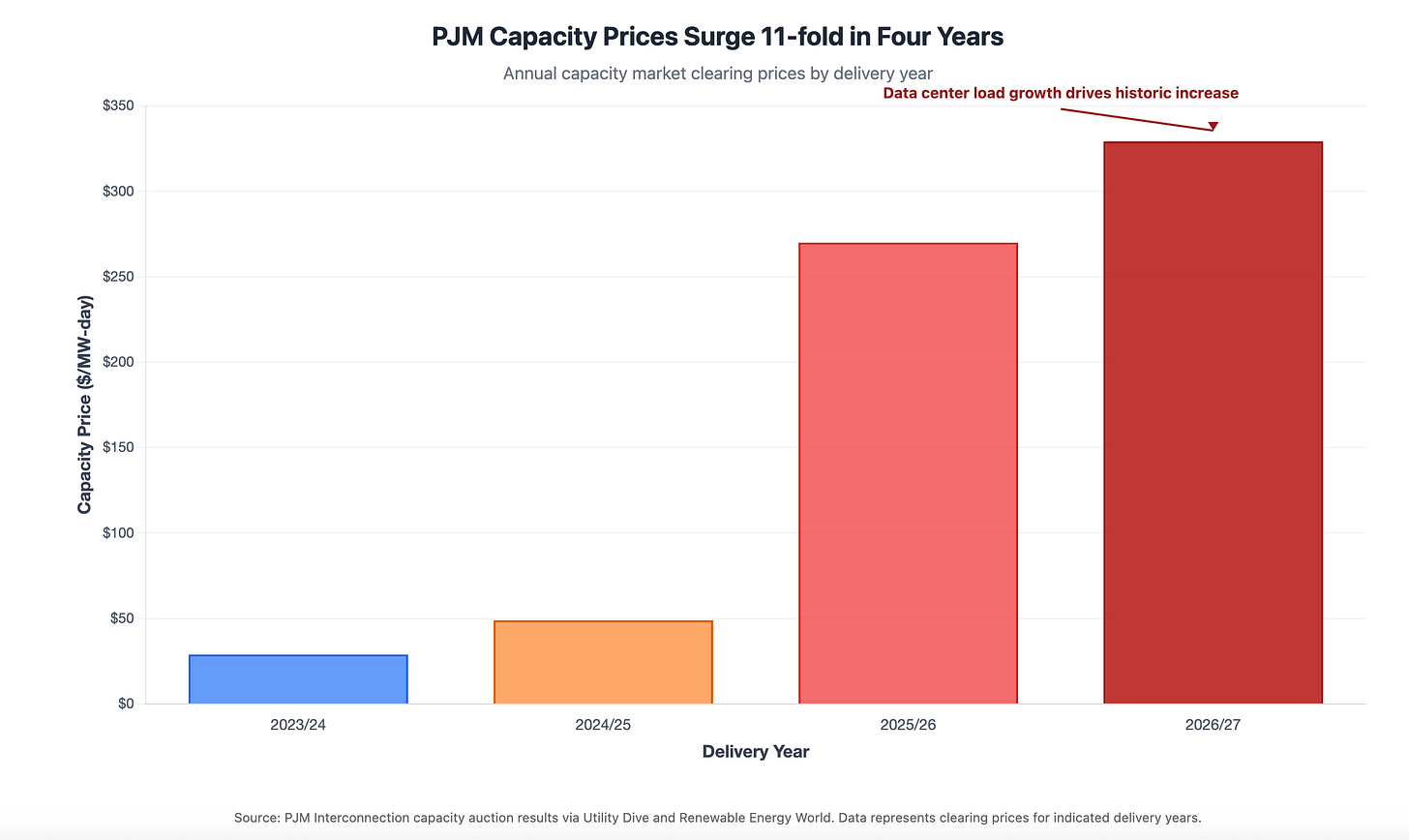

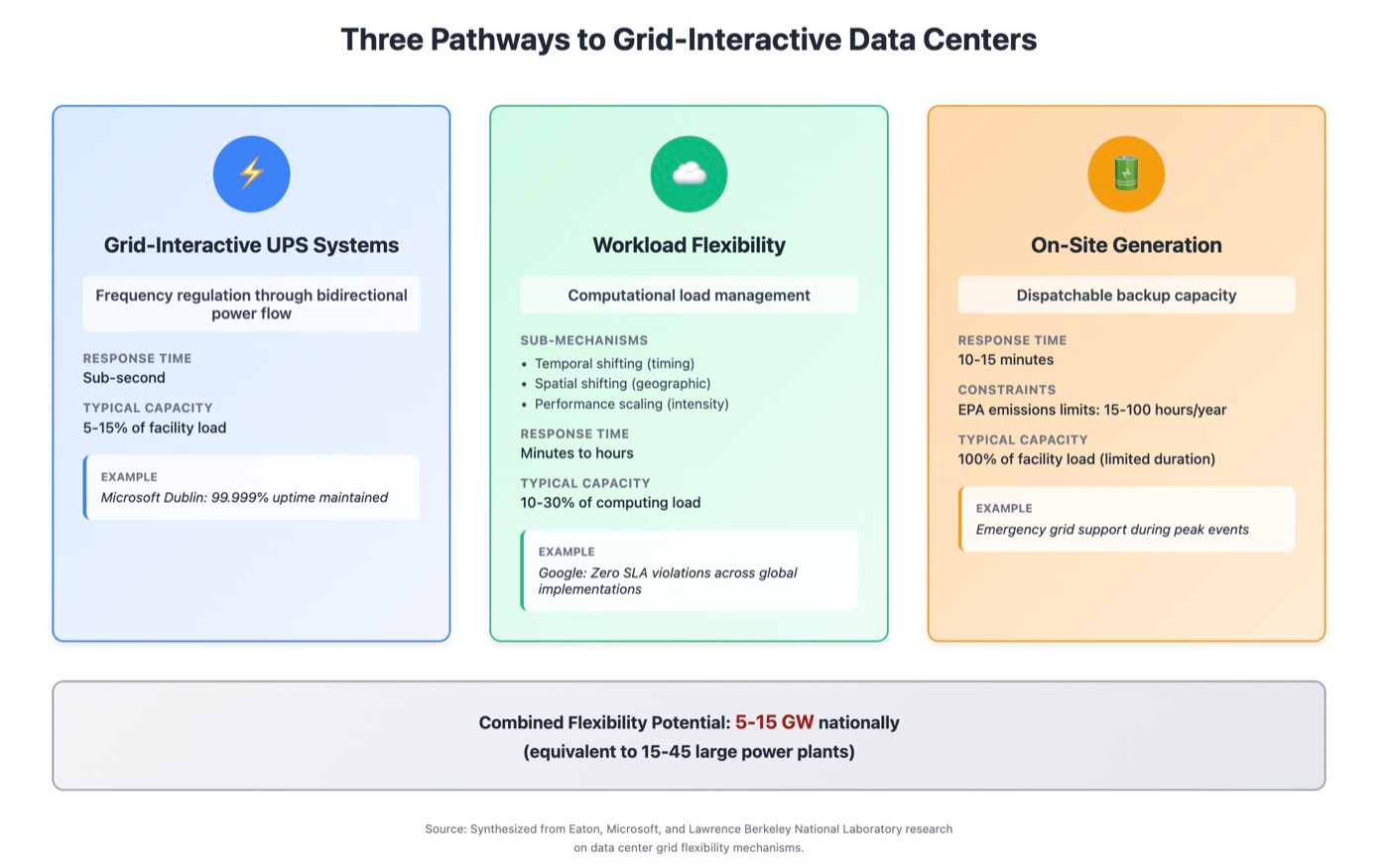

Data centers are transforming from passive power consumers into active grid participants. The U.S. will add 65 GW of data center capacity through 2029²⁵, and this growth unlocks a strategic opportunity. The industry can now provide 5-15 GW of dispatchable grid resources through strategic curtailment (equivalent to 15-45 large power plants) while simultaneously meeting unprecedented electricity demand. This transformation fundamentally reshapes how critical computing infrastructure supports grid reliability. Consider the stakes: annual capacity market costs in PJM have surged $14.7 billion for the 2026/27 delivery year, an 11-fold increase that demonstrates both the crisis and the opportunity.

AI-driven power demand, aging grid infrastructure, and advanced control technologies have converged to enable data centers to provide interactive grid services without compromising operational requirements. While Tier IV facilities target 99.995% uptime—the industry’s most stringent standard—most cloud and AI workloads achieve reliability through geographic redundancy rather than individual site availability. This architectural approach unlocks flexibility impossible for traditional colocation or enterprise data centers. Google, Microsoft, and other hyperscalers have already proven technical feasibility through major implementations, while electricity markets develop new compensation mechanisms worth billions annually.

Record electricity demand creates urgent flexibility needs

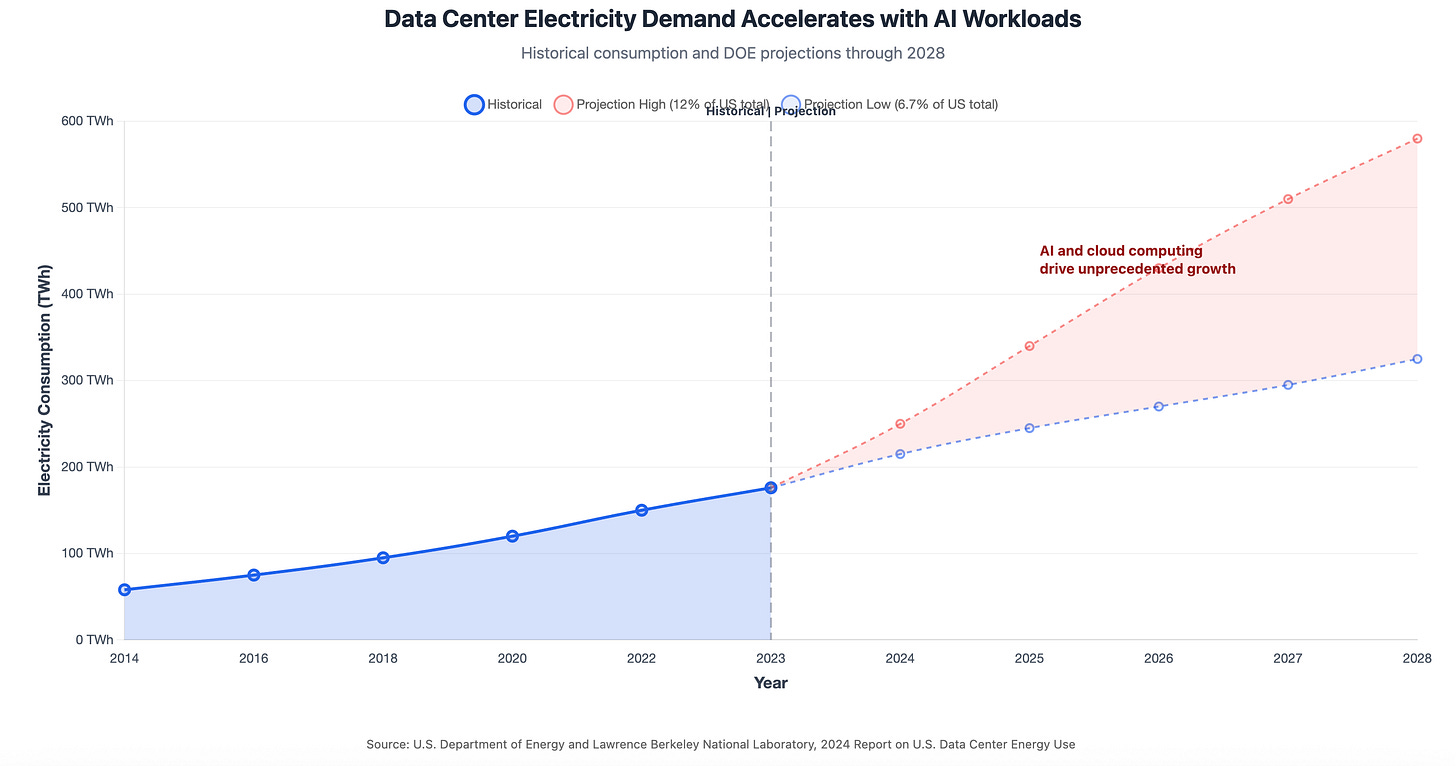

US electricity demand is surging after nearly two decades of stagnation. Peak demand hit 745,020 MW in July 2024, shattering previous records¹, with data centers driving the majority of growth. Projections show a 25% demand increase by 2030², a fundamental reset of grid planning assumptions.

Data center electricity consumption tells the story most dramatically. The sector consumed 4.4% of US electricity in 2023 and is projected to reach 6.7-12% by 2028, as AI workloads demand substantially more power than traditional computing³.

This surge coincides with dual-peaking patterns emerging across multiple grid regions from electrification of heating and transportation.

Grid reliability margins are shrinking fast. Over half of North America faces potential electricity supply shortfalls within the next decade⁴. NERC’s 2024 assessment projects significant dispatchable generation retiring over the next 10 years, while new generation and transmission projects require 5-8 year development timelines. The grid needs flexible demand resources that can deploy within months, not years.

The economics underscore the urgency. PJM Interconnection’s capacity market prices surged from $28.92/MW-day to $329.17/MW-day for 2026/27 delivery⁵—an 11-fold increase largely driven by data center load growth.

This translates to billions in annual cost increases that demand flexibility programs can partially offset.
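To make the scale concrete, the arithmetic behind the PJM figures can be sketched in a few lines. The two clearing prices come from the text above; the 100 MW flexible resource is a purely hypothetical input, and the calculation ignores accreditation, performance penalties, and zonal price differences.

```python
# Capacity clearing prices quoted in the article, in $/MW-day.
OLD_PRICE = 28.92
NEW_PRICE = 329.17
DAYS_PER_YEAR = 365


def fold_increase(old: float, new: float) -> float:
    """Ratio of the new price to the old price."""
    return new / old


def annual_cost_per_mw(price_per_mw_day: float, days: int = DAYS_PER_YEAR) -> float:
    """Annual capacity cost of one MW at a given $/MW-day price."""
    return price_per_mw_day * days


def annual_capacity_value(flex_mw: float, price_per_mw_day: float) -> float:
    """Gross annual value if flex_mw of load flexibility cleared at this price.

    Hypothetical simplification: no derating, penalties, or program fees.
    """
    return flex_mw * annual_cost_per_mw(price_per_mw_day)


print(f"{fold_increase(OLD_PRICE, NEW_PRICE):.1f}x")           # 11.4x
print(f"${annual_cost_per_mw(NEW_PRICE):,.0f} per MW-year")     # $120,147 per MW-year
print(f"${annual_capacity_value(100, NEW_PRICE):,.0f} per year for 100 MW")
```

At the quoted price, each megawatt of capacity obligation costs roughly $120,000 per year, which is why even modest amounts of verified flexibility carry meaningful value.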

Data centers possess unique interactive grid capabilities

Data centers maintain sophisticated power infrastructure with redundant systems that create inherent flexibility—capabilities traditional industrial loads lack⁶. Advanced control systems, existing energy storage through UPS batteries, and high power density enable rapid response to grid signals without compromising mission-critical operations.

The technical differentiation starts with bidirectional power flow through grid-interactive UPS systems. Microsoft’s Dublin data center demonstrates how batteries simultaneously provide backup power and frequency regulation services⁷, with sub-second response times for grid stabilization. As analyzed in our comprehensive report on AI data center power and battery storage opportunities, these systems charge and discharge based on grid needs while maintaining full backup capability—with multiple revenue streams offsetting 40-60% of system costs over ten years.
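The core constraint of a grid-interactive UPS, serving grid requests without ever dipping into the energy reserved for backup, can be illustrated with a minimal sketch. The class, its field names, and the example numbers are all hypothetical; conversion losses, battery degradation, and real regulation-signal formats are ignored.

```python
from dataclasses import dataclass


@dataclass
class UpsBattery:
    capacity_kwh: float        # usable energy capacity
    soc_kwh: float             # energy currently stored
    backup_reserve_kwh: float  # energy that must always remain for backup
    max_power_kw: float        # inverter power limit in either direction

    def respond(self, requested_kw: float, duration_h: float) -> float:
        """Serve a grid request and return the power actually delivered.

        Positive values discharge to the grid, negative values charge.
        The request is clipped so the backup reserve is never touched.
        """
        if duration_h <= 0:
            raise ValueError("duration_h must be positive")
        # Respect the inverter limit first.
        power = max(-self.max_power_kw, min(self.max_power_kw, requested_kw))
        if power > 0:
            # Only energy above the backup reserve may be sold to the grid.
            available_kwh = max(0.0, self.soc_kwh - self.backup_reserve_kwh)
            power = min(power, available_kwh / duration_h)
        else:
            # Charging is limited by the remaining headroom.
            headroom_kwh = self.capacity_kwh - self.soc_kwh
            power = max(power, -headroom_kwh / duration_h)
        self.soc_kwh -= power * duration_h
        return power


battery = UpsBattery(capacity_kwh=1000.0, soc_kwh=800.0,
                     backup_reserve_kwh=700.0, max_power_kw=600.0)
print(battery.respond(500.0, 0.25))   # 400.0 (limited by the reserve)
print(battery.soc_kwh)                # 700.0
```

In this sketch the backup reserve is a hard floor, so grid revenue comes only from the capacity above it; sizing that margin is the main design decision.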

Workload flexibility represents the most significant opportunity. Data centers achieve substantial power reduction through computational load management⁸: temporal flexibility (shifting computation timing), spatial flexibility (moving workloads between facilities), and performance scaling (reducing computation intensity during peak periods).
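The three levers can be combined in a simple planner. The following is a minimal sketch, not any operator's actual scheduler: the `Workload` fields, the greedy ordering (defer first, then move, then throttle), and the example fleet are all assumptions made for illustration. Note that moving a workload only reduces load at the local site; the receiving facility's load rises.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    power_kw: float
    deferrable: bool = False  # temporal flexibility: can run later
    movable: bool = False     # spatial flexibility: can run at another site
    min_scale: float = 1.0    # performance scaling: lowest allowed power fraction


def plan_curtailment(workloads, target_kw):
    """Greedy plan: defer, then move, then throttle until target_kw is shed.

    Returns (plan, shed_kw), where plan is a list of (name, action, kw).
    Each workload is used by at most one lever.
    """
    levers = [
        ("defer", lambda w: w.power_kw if w.deferrable else 0.0),
        ("move", lambda w: w.power_kw if w.movable else 0.0),
        ("throttle", lambda w: w.power_kw * (1.0 - w.min_scale)),
    ]
    plan, shed, used = [], 0.0, set()
    for action, saving in levers:
        # Within each lever, take the largest savings first.
        for w in sorted(workloads, key=saving, reverse=True):
            if shed >= target_kw:
                return plan, shed
            kw = saving(w)
            if kw > 0 and w.name not in used:
                plan.append((w.name, action, kw))
                used.add(w.name)
                shed += kw
    return plan, shed


fleet = [
    Workload("training", 400.0, deferrable=True),
    Workload("batch", 200.0, movable=True),
    Workload("inference", 300.0, min_scale=0.5),
    Workload("web", 100.0),
]
plan, shed = plan_curtailment(fleet, target_kw=620.0)
print(plan)  # [('training', 'defer', 400.0), ('batch', 'move', 200.0), ('inference', 'throttle', 150.0)]
print(shed)  # 750.0
```

A production planner would also weigh deadlines, migration cost, and service-level commitments; the greedy ordering here simply reflects the idea that deferring work is usually the least disruptive lever.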

On-site backup generation adds another dimension. Data centers maintain diesel generators with substantial fuel storage, providing dispatchable capacity during emergencies. Recent regulatory clarifications now allow these assets to provide local grid support during certain emergency conditions, though EPA restrictions still limit broader market participation.

Advanced control systems enable real-time communication with grid operators through APIs and automated response protocols. Google’s implementations integrate with multiple ISOs using machine learning algorithms that optimize power-performance relationships while maintaining zero service level agreement violations⁹.

Barriers temper near-term deployment expectations

Technical capabilities are proven, but several constraints limit widespread adoption in the near term.

Operational complexity remains the primary barrier. Most facilities lack the advanced control systems hyperscalers deploy, and integrating real-time grid signals with mission-critical operations requires substantial engineering investment. Smaller operators face a chicken-and-egg problem: markets won’t compensate flexibility until it’s proven reliable, but proving reliability requires upfront investment without guaranteed returns.

Regulatory uncertainty stalls deployment. Backup generator participation in grid markets faces EPA emissions restrictions limiting annual operating hours to 15-100 hours depending on permit type and local air quality status. FERC Order 2222 theoretically enables DER aggregation, but implementation varies widely—PJM’s market rules remain under development three years after the order’s effective date, while ERCOT has no capacity market to participate in.
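The practical effect of an annual run-hour cap can be sketched as a simple budget that a dispatch system would check before committing a generator. The class and method names are hypothetical, and the 15-hour limit in the example is just the low end of the range cited above; actual limits depend on the permit.

```python
class GeneratorHourBudget:
    """Tracks generator run hours against an annual permit limit (illustrative)."""

    def __init__(self, annual_limit_h: float):
        self.annual_limit_h = annual_limit_h
        self.used_h = 0.0

    @property
    def remaining_h(self) -> float:
        return max(0.0, self.annual_limit_h - self.used_h)

    def can_dispatch(self, duration_h: float) -> bool:
        """True if a run of duration_h fits within the remaining budget."""
        return 0 < duration_h <= self.remaining_h

    def dispatch(self, duration_h: float) -> bool:
        """Record a run if it fits; return whether it was accepted."""
        if not self.can_dispatch(duration_h):
            return False
        self.used_h += duration_h
        return True


budget = GeneratorHourBudget(annual_limit_h=15.0)
for _ in range(4):
    print(budget.dispatch(4.0))  # True, True, True, False
print(budget.remaining_h)        # 3.0
```

With a 15-hour cap, three four-hour events exhaust most of the year's allowance, which illustrates why backup generators are a limited resource for routine market participation.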

Baseline measurement challenges complicate compensation. How do grid operators verify a data center’s load reduction without access to proprietary workload data? Traditional demand response programs struggle with baseline gaming, where participants inflate normal usage to increase curtailment payments. Data centers’ highly variable computational loads make establishing credible baselines particularly difficult.
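One way to see the problem is with an averaging baseline of the kind many demand response programs use: the mean of the highest few loads among recent non-event days. The sketch below assumes a "high 4 of 5" rule and made-up load values; it is not the rule of any specific market, and real programs add adjustments that are omitted here.

```python
from statistics import mean


def high_x_of_y_baseline(daily_loads_mw, x=4, y=5):
    """Average of the x highest loads among the y most recent non-event days."""
    if not 0 < x <= y:
        raise ValueError("require 0 < x <= y")
    recent = daily_loads_mw[-y:]
    if len(recent) < y:
        raise ValueError("not enough history for the baseline window")
    return mean(sorted(recent, reverse=True)[:x])


def credited_reduction(baseline_mw, metered_mw):
    """Load reduction credited during an event; never negative."""
    return max(0.0, baseline_mw - metered_mw)


# Hypothetical event-hour loads (MW) on prior non-event days.
history = [90.0, 100.0, 98.0, 102.0, 80.0, 100.0]
baseline = high_x_of_y_baseline(history)
print(baseline)                            # 100.0
print(credited_reduction(baseline, 85.0))  # 15.0

# Baseline gaming: running 10 MW hotter on baseline days raises the
# credited reduction by 10 MW without any extra real curtailment.
inflated = [mw + 10.0 for mw in history]
print(credited_reduction(high_x_of_y_baseline(inflated), 85.0))  # 25.0
```

Because the credited reduction depends entirely on the history fed into the baseline, loads that swing with computational demand, or that are deliberately inflated, translate directly into payment errors.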

Workload type matters critically. AI inference requests (real-time queries requiring immediate response) offer minimal flexibility compared to training workloads that can be paused or shifted. As AI deployments mature from model development toward production inference, the flexibility window may narrow substantially. Google’s success with ML workload shifting may not translate to latency-sensitive applications dominating other operators’ facilities.

These barriers are surmountable, but they explain why grid-interactive deployments remain concentrated among sophisticated operators with resources to navigate complexity and absorb regulatory risk.